The standoff between the Pentagon and Anthropic over integrating artificial intelligence into military operations and setting limits on its use reached a peak this week, with Secretary of Defense Pete Hegseth giving the AI company until 5:01 p.m. ET on Friday to make concessions to the government’s demands. Anthropic is not backing down, at least for now, but the battle between the military and industry over AI is just beginning. The Pentagon is at odds with private companies managing AI in ways untested since World War II.

Anthropic on Thursday rejected Defense Secretary Pete Hegseth’s request to relax certain safeguards on its models for military use, such as restrictions on domestic mass surveillance and fully autonomous weapons, saying the request violates the company’s policies. Chief Executive Dario Amodei’s decision comes after the Pentagon warned that the partnership could be terminated if the company refuses to support “all lawful uses.”

“It is the department’s prerogative to select the contractor that best aligns with its vision,” Amodei said in a statement Thursday. “But given the tremendous value that Anthropic’s technology brings to our nation’s military, we hope they reconsider.”

The conflict highlights the new reality that private companies developing frontier AI may seek to impose their own limits on how the technology can be deployed, even in the context of national security.

In July, the Department of Defense awarded contracts worth up to $200 million each to Anthropic, OpenAI, Google DeepMind, and Elon Musk’s xAI to develop prototype frontier AI capabilities related to U.S. national security priorities. These awards demonstrate how aggressively the Department of Defense is moving to bring cutting-edge commercial AI into defense operations.

This urgency is also reflected in the Department of Defense’s internal planning. A Jan. 9 memorandum outlining the military’s artificial intelligence strategy calls for the U.S. military to become an “AI-first” combat force and to accelerate the integration of major commercial AI models across combat, intelligence, and enterprise operations.

“There are no winners in this,” Lauren Kahn, a senior research analyst at Georgetown’s Center for Security and Emerging Technology, told CNBC in a recent interview about the conflict between the Pentagon and Anthropic. “It leaves a sour taste in everyone’s mouth.”

But what is actually happening marks a shift, a departure from decades of defense innovation in which governments themselves controlled the creation of technology.

“For most of the period since World War II, the U.S. government has defined the frontiers of advanced technology,” said Rear Admiral Lorin Selby, former chief of naval research. “Governments set requirements and funded basic research, and industry ran to government-driven specifications. From nuclear propulsion to stealth to GPS, the state was the primary driver of discovery, and industry was the integrator and manufacturer.”

Selby says AI has turned that model on its head.

“Today, the commercial sector is the primary driver of frontier capabilities. Private capital, global competition, and the scale of commercial data are advancing AI at a pace that traditional government research and development structures cannot easily replicate. The Department of Defense is no longer defining the limits of what is technically possible in artificial intelligence, but adapting to them,” he said.

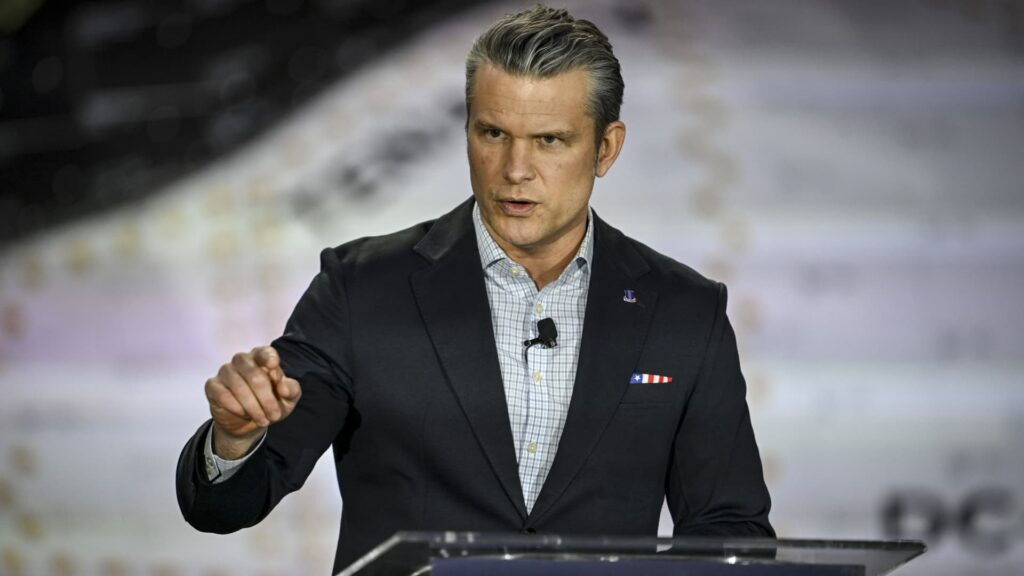

U.S. Defense Secretary Pete Hegseth speaks during a visit to Sierra Space, Monday, February 23, 2026, in Louisville, Colorado.

Aaron Hontiveros | Denver Post | Getty Images

This reversal of the balance of power over technology comes with both opportunities and risks.

“We should never be in a situation where private companies feel like they have influence over the U.S. government or our Western allies because of the technological capabilities they provide,” said Joe Scheidler, former White House deputy director and special adviser and co-founder and CEO of AI startup Helios. “Technologists need to be responsible for building it and running it, but the government should be the one making the decisions.”

Anthropic and the Department of Defense did not respond to requests for comment.

Why the military needs civilian AI

Public-private partnerships have long supported U.S. defense innovation, from World War II industrial mobilization to modern aerospace and cybersecurity programs. But artificial intelligence is different because cutting-edge capabilities are increasingly concentrated in commercial companies rather than government labs.

“Strong public-private partnerships give the United States an advantage,” Scheidler said. “You won’t find a more dynamic and innovative talent pool than America’s entrepreneurial community. The idea of trying to replicate that level of innovation within government itself…is difficult.”

That concentration is precisely why the government seeks partnerships, but Selby says the dependence is largely driven by speed. “Innovation cycles for venture-backed companies run in months. Traditional acquisition cycles run in years. Without commercial AI providers, governments would be slower, less adaptable, and much more expensive,” he said.

When critical national security tools are developed by private companies, “the main change is that the government no longer has complete control over the development of cutting-edge technological tools,” said Betsy Cooper, president of the Aspen Policy Academy and former general counsel for the U.S. Department of Homeland Security.

Because commercial AI systems are typically built first for broad markets rather than military missions, there can be a gap between how companies design the technology and how governments want to deploy it, Cooper said.

The misalignment can become more pronounced when corporate policy, reputational concerns, or pressure from global customers conflict with government goals, a dynamic that is currently visible in the Anthropic debate.

“Companies may not want to risk a negative reaction from their customer base if their product is used for highly controversial reasons, such as developing autonomous lethal weapons or pre-emptively killing someone before a crime is committed,” Cooper said.

Government has long-term influence

Despite the shift to commercial technology, defense leaders are unlikely to relinquish control of mission-critical systems.

“The first thing to understand is, from what we’ve seen so far, the Department of Defense has no intention of relinquishing ultimate control,” said Brad Harrison, founder of Scout Ventures, an early-stage venture capital firm that invests at the intersection of national security and critical technology innovation. “Governments still want to understand everything involved, all the dependencies and risks.”

Harrison, a former U.S. Army Airborne Ranger and West Point graduate, said that “governments are going to be very careful about how they let AI interact with these data layers” because AI could ultimately impact decisions such as how to intercept incoming threats. “No one wants to be in charge of Skynet,” he said, referring to the fictional AI from the Terminator universe that sparked nuclear war.

Governments also hold powerful tools to influence companies, including procurement decisions, export controls, and regulatory authorities. “Government has a lot of influence,” Harrison said. “If you don’t want to work with them, they have a lot of ways to make it a very difficult decision,” he added.

But Selby says, at least for now, leverage is flowing in both directions. “In the short term, companies with rare AI talent and unique models could have the most influence. In the long term, sovereign governments will retain regulatory power, contracting power, funding scale and, if necessary, legal enforcement,” he said.

In Selby’s view, the most important question is “whether we can build durable public-private agreements that treat AI as fundamental national security infrastructure and not just a vendor relationship.”

Risks in the new military-Silicon Valley industrial complex

In the end, experts say, the question is less about whether companies or governments hold lasting influence, and more about how the relationship will evolve as AI becomes central to state power.

“By building collaboration and resilience in public-private relationships, AI can strengthen national security while sustaining innovation,” Selby said. “If we fail to do this, we risk a future where capabilities are abundant but coordination is weak,” he added.

The emerging military-Silicon Valley industrial complex presents many new forms of risk. For example, relying on externally developed AI can create vulnerabilities if the system unexpectedly fails or becomes unavailable, especially if military forces have come to depend on the AI during operations.

“Overreliance can be deadly,” said Shanka Jayasinghe, founder of Onto AI, which develops AI tools for military, healthcare, financial, and enterprise customers, describing a scenario in which special operations forces rely on AI-enhanced mission coordination tools during deployments. If these systems fail after long-term use, “many lives will be at risk,” he said.

Vendor lock-in is also a concern. Once AI platforms are integrated into workflows, they can be difficult to replace. “At the current rate of advancement in AI, it will be difficult to unseat the incumbent,” Jayasinghe said.

But Harrison says one risk the Pentagon is not exposed to is becoming captive to a single company. “The U.S. government is not going to rely on one company in Silicon Valley,” he said. “They will be very methodical in testing the system, controlling the data layer, and moving forward in stages.”

One approach is to build what some technologists call “sovereign AI architectures,” systems designed to allow governments to benefit from commercial innovation while maintaining independence from vendors.

“We talk a lot internally about the concepts of sovereign information and vendor independence,” Scheidler said, arguing that the U.S. ecosystem remains broad enough to prevent over-reliance on a single provider. “New ideas are born every day, and we don’t need to rely on one vendor to make them a reality,” he said.